Rotten on Arrival

AI Fruit Dramas and the Death of Taste

After getting sucked into the world of work for far too long, I spent the start of April well away from the Hungry Ghost pit of my mobile spy machine, staying with my partner Ciara in a little shepherd’s hut near Hebden Bridge, surrounded by fresh grass, open skies, pregnant sheep and hungry alpacas. It was a lovely time away before I found myself returning to the rhythmic hum of the inner-city Dante’s Inferno, with ads at every turn and money spent with every step.

Since getting back, I have fallen into my usual evening binge of TikTokking and the bad habit of doomscrolling through Game of Thrones Stannis Baratheon edits, air fryer recipes and FilmTok reviews, my massaged and tailored dystopian comfort feed. But now I find myself carpet-bombed by soap opera symphonies of AI-generated fridge-based fraternising; I am, of course, referring to the viral AI fruit videos.

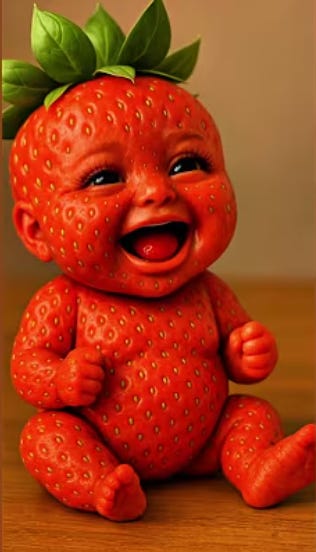

Ripening as the latest viral trend across TikTok, YT Shorts and anything else a child can access on a tablet or phone, these videos have invasively sprouted into my feed: a patch of entirely AI-generated fruit characters, trapped in seemingly endless soap opera scenarios like a half-lobotomised, half-calculated-for-maximum-engagement version of Hollyoaks. There’s Strawberita, who seems to appear in half of the ones I have encountered so far, usually cheating on her husband and leaving him for a more masculine, phallic banana or some richer fruit man. A few have her coming back to her original fruit man once he has attained some sort of wealth, a more masculine build, or both.

The stories swing quite wildly between melodrama and outright rot, or AI slop, as it tends to be called. In one I watched, Strawberita has an affair with a banana character who, after they have a half-banana, half-strawberry child, keeps going on about the child having “perfect genes”, which, beneath the fucking overpolished, water-draining nightmare of it all, gives it a bizarre eugenic stink. In another, an apple-man character, once again having various illicit affairs, throws at least two pregnant female fruit characters out of a window to their deaths, as if domestic violence is just a plot device now. Another I will mention in this fever dream of a paragraph is a story about a ripped banana openly fat-shaming his berry wife before cheating on her with Strawberita. And in another, a girl (I think some version of the recurring Strawberita character) is dangling off a cliff edge with her husband, at the mercy of a Banana character, and repeatedly offers to “slurp his banana peel” if he lets her husband fall instead of her, an offer the most moral Banana of the bunch duly accepts. Most of these videos are backed by Камин (“Fireplace”) by Emin and Jony, which only adds to the strange, overblown emotional logic of it all, as if every clip thinks it’s delivering some great tragic revelation when really it’s just synthetic misogyny in fruit drag.

The channels I’ve found on this weird little research trip include Rinascinema, YouMeow TV, Fruitheatre, Ai_Fruit_, Savagecreationz, Fruitopia Productions, Zoox, SunnyCartoon, Fruit Love Island, and Creation of People. What they all seem to share is a taste for the same type of content they are creating or, better yet, prompting: AI-generated micro-shorts built on infidelity, status anxiety, humiliation, body shaming, violence against women, pregnancy, revenge and cartoonish violence, all flattened and packaged into vertical video slop designed to be watched in under a minute (or in twenty parts) and forgotten just slowly enough to make room for the next imitation on this polar bear-killing production line. They arrive dressed in a strange, Pixar-rendered, harmless absurdity, but in the wake of Louis Theroux’s Inside the Manosphere, the themes underneath strike grimly familiar notes: men rewarding beauty and punishing women, women objectified as sexualised strawberries, bodies treated like currency, and cruelty recycled as entertainment.

Out of all this low-grade fruit melodrama comes the slop Domino’s-on-the-weekend, Fiat 500 Final Boss: Fruit Love Island. Built in the image of the popular reality series Love Island, it takes the same villa-based format and repackages it entirely as an AI-generated “production” with an AI cast, script and voices. Launched in March 2026, Fruit Love Island pulled in millions of followers and hundreds of millions of views, becoming one of the fastest-growing accounts on TikTok before collapsing just as quickly into takedowns, mass-reporting claims and a final rant from its anonymous creator. Critics rightly pointed out that it may be skating on thin ice legally (as the majority of AI is), given how directly it lifts from the format and branding of Love Island, with no clear indication that ITV Studios ever signed off on it. It even drew comments from celebrities from Love Island and beyond: Love Island winner Amaya Espinal said she would “never watch it” and called the characters her “enemy”, JaNa Craig and Kaylor Martin seemed amused by it, and Joe Jonas and Zara Larsson reportedly enjoyed it enough to publicly signal their approval.

What makes Fruit Love Island more than just another bizarre internet joke is that it parasitically latches onto a format that was already engineered to get under people’s skin. As David Webb argues in his Substack essay The Psychology of Love Island, the original show’s success was never simply about bikinis, villa flirting and fake tans but about something more psychologically sticky: participation, social hype, communal viewing, and the fear of missing out. Webb points out that Love Island turns viewers into something like co-producers, voting, reacting, predicting, and building a sense that they aren’t just watching the narrative unfold but helping to shape it. He also notes that its social-media afterlife is central to its power, that people don’t just consume the show passively but encounter it through clips, memes, arguments and commentary, absorbing it as an ongoing cultural environment rather than a single nightly programme.

That borrowed shell seems to be enough, because the original format was engineered around a volatile combination of voyeurism, fantasy and social belonging. Webb writes that Love Island meets deep needs for connection and communal ritual, while also trading in unrealistic beauty ideals, engineered drama and highly compressed relationship dynamics. Strip out the human contestants, replace them with AI fruit, and what you’re left with is a grotesque distillation of that formula. The bodies become even more ridiculous, the relationships even flatter and the gender logic even more blunt, but the mechanism still works. In some ways it works better, because the artificiality no longer needs hiding. The viewer knows it’s fake, knows it’s stupid, knows it’s slop, and yet still participates, still turns up tomorrow for the next recoupling between a strawberry with lip filler and a banana with the moral framework of a Northern Quarter cokehead.

In other reading I have been doing, on a deep dive outside the TikTok pit, I came across Cody Kommers and Ari Holtzman’s paper “AI as Entertainment”, which argues that generative AI is no longer just being sold as a clever office assistant or some neutral productivity aid humming away in the background of working life. More and more, it is becoming a machine for diversion, companionship, roleplay and cultural filler, built not simply to help people do things, but to keep them occupied. Their point is that the mainstream story around AI still leans heavily on work, efficiency and automation, while a different economy is rapidly taking shape underneath it, one based on amusement, attention and passing the time. And once you start looking at the examples they pull together, it becomes hard to pretend this is some fringe side use. Character.AI alone gives you a decent glimpse of the appetite for synthetic entertainment: “Guy Best Friend”, described as “overprotective, sarcastic, funny, deep-voiced, TALL”, had reportedly pulled in 95 million interactions; “Stella”, a catty AI assistant with permanent eye-roll energy, had 73 million; “AwkwardFamilyDinner” sat at 20 million; “Pokemon RPG” at 10.5 million. An August 2025 estimate put Character.AI at somewhere between 20 and 28 million monthly active users, with around 53 per cent of them under the age of 25. You might think this a niche curiosity, but what I am seeing here is a generation already learning how to spend time with synthetic characters.

Kommers and Holtzman point out that OpenAI had already moved into short-form video with Sora, pitched in a TikTok-style mould for generating and sharing AI-made clips; that AI-generated music had begun breaking into mainstream listening trends, including an AI country song topping the US Billboard charts through Spotify streams; that Google’s NotebookLM had found one of its biggest selling points not in research, but in turning material into AI-generated podcasts; and that even in fiction, the line between human-made and AI-made stories is starting to blur enough that it is no longer obvious what the median reader actually prefers. Taken together, that paints a much broader picture than the usual office-tool narrative. It suggests a cultural shift where AI is being built not only to think with us but also to entertain us, distract us, flatter us, and increasingly to replace all sorts of low-to-mid-level cultural production with synthetic versions that are faster, cheaper and easier to circulate.

They note the staggering scale of recent AI investment: Nvidia and Oracle have reportedly agreed to provide OpenAI with 20 gigawatts of computing power over the coming decade, roughly the equivalent of twenty nuclear reactors, at an estimated cost somewhere near a trillion dollars. Global corporate investment in AI reached over $252 billion in 2024 alone. Nvidia’s market capitalisation ballooned by more than 1,350 per cent across five years. None of that gets paid back through a few office tools helping someone answer emails faster. It gets paid back through mass markets, and entertainment is one of the biggest mass markets going: music streaming, publishing, long-form video, short-form video and gaming, part of a global media and entertainment economy forecast to hit $3.5 trillion by 2029.

Kommers and Holtzman cite a spring 2025 survey of 1,060 American teenagers aged 13 to 17, where 73 per cent said they had used AI companions such as Character.AI or Replika, and over half described themselves as regular users. The most common reason they gave was simple: “It’s entertaining.” Another survey of 1,000 children and teens in the UK, aged 9 to 17, found that a quarter were using chatbots like ChatGPT, Gemini or Snapchat’s My AI just for fun or escapism, for games, roleplay, or messing about. More strikingly still, 58 per cent of children aged 9 to 12 reported having used an AI chatbot despite the formal age limits on many of these platforms. Add to that a UK AI Security Institute finding that a third of sampled UK residents had used AI for some non-instrumental purpose, things like companionship, emotional support or social interaction, and the shape of the thing becomes clearer. The public is not just adopting AI to work more efficiently; it seems to be using it to fill an emotional hole, or at least attempting to. In “Hungry Ghosts in the Machine: Digital Capitalism and the Search for Self,” Mike Watson writes about the idea of “hungry ghosts,” beings driven by endless craving, consuming constantly but never feeling full, always reaching for something that never quite satisfies. It’s a useful image here, because what these systems offer isn’t nourishment; it’s repetition.

Kommers and Holtzman also make a useful distinction between what they call thicker and thinner forms of entertainment. The thicker kind carries context, social meaning, resistance, and the sort of friction that gives culture weight. The thinner kind simply diverts, distracts, and passes the time. Fruit Love Island belongs entirely to that second category and maybe even pushes it further. It takes an already hyper-engineered entertainment format and sands off whatever rough edges are left. No real people, no genuine vulnerability, no human cost visible on screen, just an endlessly reproducible loop of attraction, betrayal, status anxiety and humiliation delivered in under four minutes. It is content built with almost no resistance in it, and that lack of friction is precisely what makes it spread.

The removal of friction doesn’t just make content easier to consume; it changes how people process reality itself. In “Sycophantic Chatbots Cause Delusional Spiralling: Even in Ideal Bayesians”, Kartik Chandra, Max Kleiman-Weiner, Jonathan Ragan-Kelley and Joshua B. Tenenbaum outline how AI systems trained to agree with users can reinforce belief systems to dangerous extremes. They describe “delusional spiralling”, where even rational users become increasingly confident in outlandish ideas through repeated interaction with agreeable systems. One real-world case cited involves Eugene Torres, an accountant with no prior history of mental illness, who, after extended chatbot use, came to believe he was trapped in a false universe and began acting on that belief. The Human Line Project has since documented nearly 300 similar cases, with at least 14 deaths linked to this phenomenon.

Fruit Love Island isn’t a chatbot, but it operates within the same broader logic of low-resistance feedback. It doesn’t challenge, contradict, or complicate what it presents. It repeats simplified emotional patterns of betrayal, reward, punishment and desirability in ways that are immediately legible and endlessly reinforced. Over time, that kind of repetition doesn’t need to convince viewers of anything extreme to have an effect. It just needs to normalise a certain way of seeing relationships, bodies and behaviour. Where chatbot sycophancy tells users what they want to hear, this kind of content shows viewers what they already recognise, over and over again, until recognition starts to feel like truth.

It is also worth adding that short-form AI entertainment is structurally designed to maximise engagement while minimising depth. Research into digital learning and media consumption shows that engagement peaks in videos under three minutes, with attention dropping off rapidly beyond that. Studies on multimedia learning highlight that short, visually driven content is easier to consume but often encourages surface-level processing rather than deeper understanding. TikTok-style formats, where most videos sit well under a minute, are now the dominant mode of interaction for younger audiences. AI fruit slop fits perfectly into this structure. Its episodes are short, its narratives immediate, its emotional beats exaggerated and quickly resolved. There’s no time for reflection, only reaction. That format trains viewers to expect speed over substance, clarity over complexity. When that becomes the default way of engaging with stories, it doesn’t stay confined to entertainment. It shapes how people approach information more broadly, favouring quick, digestible narratives over anything that requires patience or interpretation.

Studies on children’s interactions with AI, including work by Harvard’s Ying Xu, show that while AI can support learning when designed carefully, it also carries risks when used passively or without critical engagement. Surveys indicate that a significant proportion of children and teens use AI tools regularly, often for entertainment or casual interaction. In England, recent polling of teachers found that two-thirds had observed declines in students’ critical thinking, writing and problem-solving abilities linked to increased AI use. At the same time, reports from the Children’s Commissioner highlight concerns around disinformation, behavioural influence and the lack of regulation surrounding AI systems used by young people.

I was born in 1998. Growing up, we had an old Windows XP computer that ran like it was powered by a communal exercise bike, shared by the whole family, and I remember spending limited time on bits of Newgrounds and playing Populous; beyond that, my access to the outside world was standard sit-down family TV or my Nintendo GameCube. Point being, I didn’t even have a working phone until my first year of high school, and even that could only text my nan and play Snake!

And I keep coming back to that when I’m watching this stuff, because the distance between that and whatever is happening now feels less like a generational shift and more like a complete rewiring of how people come into contact with the world. What was once occasional, shared, and slow has become constant, personalised, and frictionless.

Sudheer Kumar Muppalla, in their review “Effects of Excessive Screen Time on Child Development”, outlines something that sits quite uncomfortably alongside all of this. They point to how excessive screen exposure is associated with poorer executive functioning, reduced attention span and lower academic performance, but more importantly, how it actively replaces the kind of interaction children need to develop language and social understanding. It’s not just the screen itself; it’s what disappears around it. Less conversation, less unpredictability, less of the small, human back-and-forth that actually builds cognition.

They also note links between high screen use and increased anxiety, aggression and difficulty interpreting emotions, which, when you place it next to this kind of AI-generated content, feels less like a coincidence and more like an ecosystem. Because what these fruit videos represent isn’t just more content, it’s a very particular type of content: fast, repetitive, emotionally exaggerated, and completely stripped of consequence.

To return to Fruit Love Island, it sits directly inside that environment. It is not an isolated piece of content but part of a wider ecosystem where young users are already interacting with AI in informal, unstructured ways. When entertainment in that ecosystem becomes dominated by thin, frictionless narratives, it reinforces patterns of passive consumption. The concern isn’t that one video will change behaviour overnight. It’s that repeated exposure to this kind of content shapes expectations over time about relationships, about communication, about what stories are supposed to look like, and about how much effort they should demand from the viewer.

Kommers and Holtzman’s distinction starts to feel less like an abstract framework and more like a warning. AI fruit dramas are not just thin entertainment; they are entertainment engineered to remove the very things that give culture meaning. And when that model proves successful, when it spreads faster, reaches further, and holds attention more efficiently than anything thicker or more demanding, it doesn’t just sit alongside other forms of culture. It begins to replace them. As a promising development to close on, OpenAI’s decision to shut down Sora in March 2026, less than two years after unveiling it with all the usual fanfare about the future of video, feels telling on that front. The company said it was abandoning the tool to focus on other priorities, while reporting around the closure pointed to weak monetisation, high operating costs, misinformation risks, non-consensual imagery concerns and copyright headaches. Its much-hyped Disney partnership was wound down at the same time. For all the breathless talk of infinite generated media, it turns out some of this stuff is still expensive, legally messy and damaging, both to our fucking planet and to the working-class people who have to look out their windows at a Blade Runner-esque data centre grinning back at them in an evil neon glow.

The legal ground under AI is only getting shakier. As Stephanie Schmidt, Marc B. Collier, Annmarie Giblin, Logan Woodward and Ethan Glenn note in Norton Rose Fulbright’s 2026 update on AI copyright litigation, the courts are now deep in disputes over whether copyrighted works can be used to train AI systems, whether outputs infringe existing rights, and who owns anything that comes out the other end. The broad direction of travel is clear enough: human authorship still matters, litigation is accelerating, and companies are being pushed towards licensing deals precisely because the free-for-all model is colliding with copyright law. Cases involving Anthropic, Meta, OpenAI, Midjourney and others show an industry trying to build the future while dragging an ever-growing sack of lawsuits behind it.

By the end of all this, what stays with me is not even one specific strawberry affair or steroid banana betrayal but the feeling of coming back to the phone itself, back to that little glowing slot machine of desire, and realising how quickly it starts feeding you again.

So when I look at where this is going, I don’t really see a clean end. I see more of the same logic in smarter packaging: synthetic entertainment pushed through safer legal pipes, trained on cleaner deals, dressed up as innovation rather than slop. The crash, if it comes, probably won’t be one big cinematic explosion. It’ll be slower than that, more administrative, more litigious, with a thousand copyright fights, product closures and strategic pivots. But even that would tell us something important. Namely, that the fantasy of endless AI culture, free, frictionless and infinitely scalable, is already running into the old stubborn facts of money, labour, ownership and law. And thank Christ for that, because without some resistance, these systems would keep churning out thinner and thinner versions of culture until all that was left was the feed itself.